For most of our work where we track the motion of people, we use Microsoft’s Kinect 2.0. We usually use to receive a greyscale depth map image and analyze that with some form of blob detection. Below you can find the basic set up for a Kinect and an example using the processing library we use, KinectPV2, to receive the various types of depth information

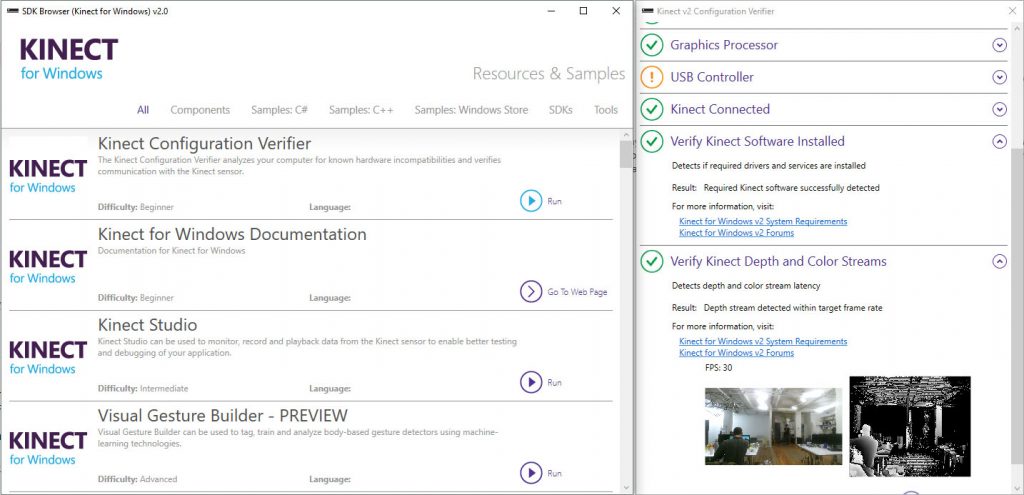

You can download the SDK for Kinect 2.0 here. Once the SDK is installed open the SDK browser and run the Kinect Configuration Verifier. Open the tab listed as Verify Kinect Depth and Color Streams to check that the depth camera is working properlyWE

The processing code below is used to retrieve and display the point cloud data from a Kinect’s IR sensor. The data is retrieved using the KinectPV2 library. The code below uses the example that comes with the library as its base. We added a couple of sliders to control the max and min depth that is retrieved and camera functionality so you can rotate around the point cloud. We also used an image of a color spectrum to map the depth of the points to a color. You can make your own or just download the one we are using here and place it in the processing data folder.

import java.nio.*;

import KinectPV2.*;

import controlP5.*;

ControlP5 cp5;

KinectPV2 kinect;

int vertLoc;

//transformations

float a = 1.9;

int zval = -200;

float scaleVal = 1;

//value to scale the depth point when accessing each individual point in the PC.

float scaleDepthPoint = 100.0;

//Distance Threashold

int maxD = 4000; // 4m

int minD = 0; // 0m

int Ztranslate = 0;

//openGL object and shader

PGL pgl;

PShader sh;

//VBO buffer location in the GPU

int vertexVboId;

int oldx = mouseX;

int oldy = mouseY;

float rotx = PI;

float roty = 0;

float zcam = 500;

int initialize = 0;

PImage img;

public void setup() {

size(1280, 720, P3D);

img = loadImage("spectrum.jpg");

kinect = new KinectPV2(this);

kinect.enableDepthImg(true);

kinect.enablePointCloud(true);

kinect.setLowThresholdPC(minD);

kinect.setHighThresholdPC(maxD);

kinect.init();

sh = loadShader("frag.glsl", "vert.glsl");

cp5 = new ControlP5(this);

cp5.addSlider("minD")

.setPosition(40,40)

.setRange(0,4000)

.setSize(240,20)

.setValue(0)

.setColorForeground(color(20,200,200))

.setColorLabel(color(255))

.setColorBackground(color(70,70,70))

.setColorValue(color(0,0,0))

.setColorActive(color(0,255,255))

;

cp5.addSlider("maxD")

.setPosition(40,80)

.setRange(0,14000)

.setSize(240,20)

.setValue(4000)

.setColorForeground(color(20,200,200))

.setColorLabel(color(255))

.setColorBackground(color(70,70,70))

.setColorValue(color(0,0,0))

.setColorActive(color(0,255,255))

;

cp5.addSlider("Ztranslate")

.setPosition(40,120)

.setRange(0,1000)

.setSize(240,20)

.setValue(0)

.setColorForeground(color(20,200,200))

.setColorLabel(color(255))

.setColorBackground(color(70,70,70))

.setColorValue(color(0,0,0))

.setColorActive(color(0,255,255))

;

PGL pgl = beginPGL();

IntBuffer intBuffer = IntBuffer.allocate(1);

pgl.genBuffers(1, intBuffer);

//memory location of the VBO

vertexVboId = intBuffer.get(0);

endPGL();

}

public void draw() {

background(0);

pushMatrix();

cam();

//draw the depth capture images

//translate the scene to the center

translate(0,0,-Ztranslate);

scale(scaleVal, -1 * scaleVal, scaleVal);

// Threahold of the point Cloud.

kinect.setLowThresholdPC(minD);

kinect.setHighThresholdPC(maxD);

//get the points in 3d space

FloatBuffer pointCloudBuffer = kinect.getPointCloudDepthPos();

// obtain XYZ the values of the point cloud

stroke(0, 0, 0);

for(int i = 0; i < kinect.WIDTHDepth * kinect.HEIGHTDepth; i+=3){

float x = pointCloudBuffer.get(i*3 + 0) * scaleDepthPoint;

float y = pointCloudBuffer.get(i*3 + 1) * scaleDepthPoint;

float z = pointCloudBuffer.get(i*3 + 2) * scaleDepthPoint;

color cc = img.get(int(z/2),2);

stroke(cc); strokeWeight(2);

point(x, y, z);

}

//begin openGL calls and bind the shader popMatrix();

stroke(255, 0, 0);

text(frameRate, 50, height - 50);

}

void cam() {

int newx = mouseX;

int newy = mouseY;

translate(width/2, height/2,zcam);

rotateY(rotx); rotateX(roty);

translate(0,0,-30);

if ((mousePressed == true) && (mouseY > 200) ) {

rotx = rotx + (oldx-newx)/50.0;

roty = roty + (oldy-newy)/50.0;

}

if(initialize == 0){

rotx = rotx + (oldx-newx)/50.0;

roty = roty + (oldy-newy)/50.0;

initialize = 1;

}

oldx = newx;

oldy = newy;

}

void mouseWheel(MouseEvent event) {

float e = event.getCount();

zcam = zcam - e*5;

}